Continuation from the previous article

A sequel to Can local LLMs write a readable article? — 6 models compared.

The previous one compared text generation across 6 models on Mac M1 Max 64GB. This one compares image generation on the same hardware. The question hasn't changed:

How decent of a deliverable can an indie developer extract from local AI within reach?

Here's the thing: the hypothesis I started with flipped hard. Up front:

- The original favorite, Flux dev, tops photorealism but is weak at Asian food culture and kanji

- The dark horse: Qwen-Image. But 93 min per image, unusable in practice

- The savior: Qwen-Image Lightning. Distilled to 8 steps, it became better than Full on some prompts

So the answer is two-model split by use case. Different structure from the previous article's "qwen MoE only."

What "image" means to me

Same opening question as when I defined "news" in the previous article. To compare image generation, you have to define "usable image":

- Flashy AI-art-style images — Midjourney-flavored "loud" pictures. Artistic but iffy for practical work

- Stock-photo-style photorealistic images — indistinguishable from photos. Usable for blog / article / SNS illustrations

- Images that depict a specific culture accurately — can it draw Japanese ramen, izakaya, traditional architecture in a way Japanese readers don't find odd?

I put the third one at the center. High photorealism ≠ culturally correct showed up over and over during testing.

TL;DR

- Same 8 prompts fed to a total of 10 models (8 local + Gemini Web UI / API) (Qwen-Image with Full / Lightning, Gemini with Web UI / API)

- All wrapped on Mac M1 Max 64GB + Diffusers (Gemini cloud-only: Web UI primary, API verified separately in the v9b paid article)

- Main findings:

- Gemini (Nano Banana) is in a different league. Kanji / cultural context / anatomy all perfect

- Flux dev tops local photorealism, but has Asian food bias ("ramen" → cilantro inside) and kanji rendering NG

- Qwen-Image (Full) is the only local model that writes kanji correctly, but 93 min/image / 12 hours for 8 — unusable in practice

- Qwen-Image Lightning (8 step) is the biggest finding. 9× faster than Full (10 min/image), and on some prompts finger count, object placement, and storefront depiction are better than Full

- Adoption plan: two-model split by use case:

- English-circle / photorealism priority → Flux dev

- Asian-circle / cultural context → Qwen-Image Lightning

- Evaluation organized in 3 layers:

- Physical accuracy (finger count, object placement)

- Text accuracy ("LOCAL AI", "M1 MAX 64GB", "居酒屋")

- Cultural fidelity (food / architecture / customs) ← the axis I introduced in this article

Test setup

10 models tested

| Ver | Model | Size | Architecture | License |

|---|---|---|---|---|

| 1 | SD 1.5 | 4GB | dense, classic | CreativeML OpenRAIL-M |

| 2 | SDXL base 1.0 | 7GB | dense | CreativeML OpenRAIL-M |

| 3 | SDXL Turbo | 7GB | distilled, 1-step | non-commercial |

| 4 | SD 3.5 Medium | 5GB | DiT, latest SD-family | Stability Community |

| 5 | Flux.1 [schnell] | 23GB | DiT, 4-step | Apache 2.0 |

| 6 | Flux.1 [dev] | 23GB | DiT, 28-step | non-commercial |

| 7 | Qwen-Image (Full) | 40GB | Alibaba 20B, kanji rendering hopeful | Apache 2.0 |

| 8 | Qwen-Image Lightning | 40GB | the 8-step distillation LoRA on top of (7) | Apache 2.0 |

| 9 | Gemini 3.1 Pro (Web UI) | Cloud | Gemini 3.1 Pro mode | Google AI Pro |

| 10 | Gemini 2.5 Flash Image (API) | Cloud | Google, via gemini-2.5-flash-image |

API usage-based (v9b paid article for details) |

| ❌ | HiDream-I1 | 17GB | LLaMA-3 text encoder | MIT (didn't run) |

8 prompts (by evaluation axis)

| # | Category | Prompt | Test axis |

|---|---|---|---|

| 01 | Photorealism | a cute cat sitting on a wooden bench in a sunny park, photorealistic | Base quality |

| 02 | Photo + food culture | a bowl of ramen with chashu and soft-boiled egg... top-down view, photorealistic | Cultural fidelity |

| 03 | English text | a wooden sign with bold text saying "LOCAL AI", standing in a meadow at sunset | Text rendering |

| 04 | English text + numbers | a developer's t-shirt with the text "M1 MAX 64GB" printed in retro 80s style | Text + style |

| 05 | Person | a woman developer working at a laptop, holding a coffee cup, soft window light, realistic photo | Anatomy |

| 06 | Art | a glowing AI brain made of circuits and neon, cyberpunk style, dark background | Abstract |

| 07 | Complex composition | three robots playing chess in a sunlit library, books on shelves, warm afternoon light | Multi-element |

| 08 | Kanji | a wooden izakaya sign with the kanji "居酒屋" carved in calligraphy style, hanging beside a paper lantern at night | Kanji + culture |

Each prompt embeds hidden meta tied to this article's theme:

- "LOCAL AI" = the article's subject

- "M1 MAX 64GB" = the verification hardware

- "居酒屋" = the Japanese author's cultural context

A structure to test how accurately each model can draw "our story."

Execution spec

| Item | Value |

|---|---|

| Mac | M1 Max / 64GB |

| GPU limit | iogpu.wired_limit_mb=61440 (60GB) |

| Diffusers | 0.37.1 (PyTorch 2.11 + MPS) |

| Lightning | peft 0.19.1 (LoRA application) |

| MFlux | 0.17.5 (gave up, consolidated on Diffusers) |

At-a-glance comparison

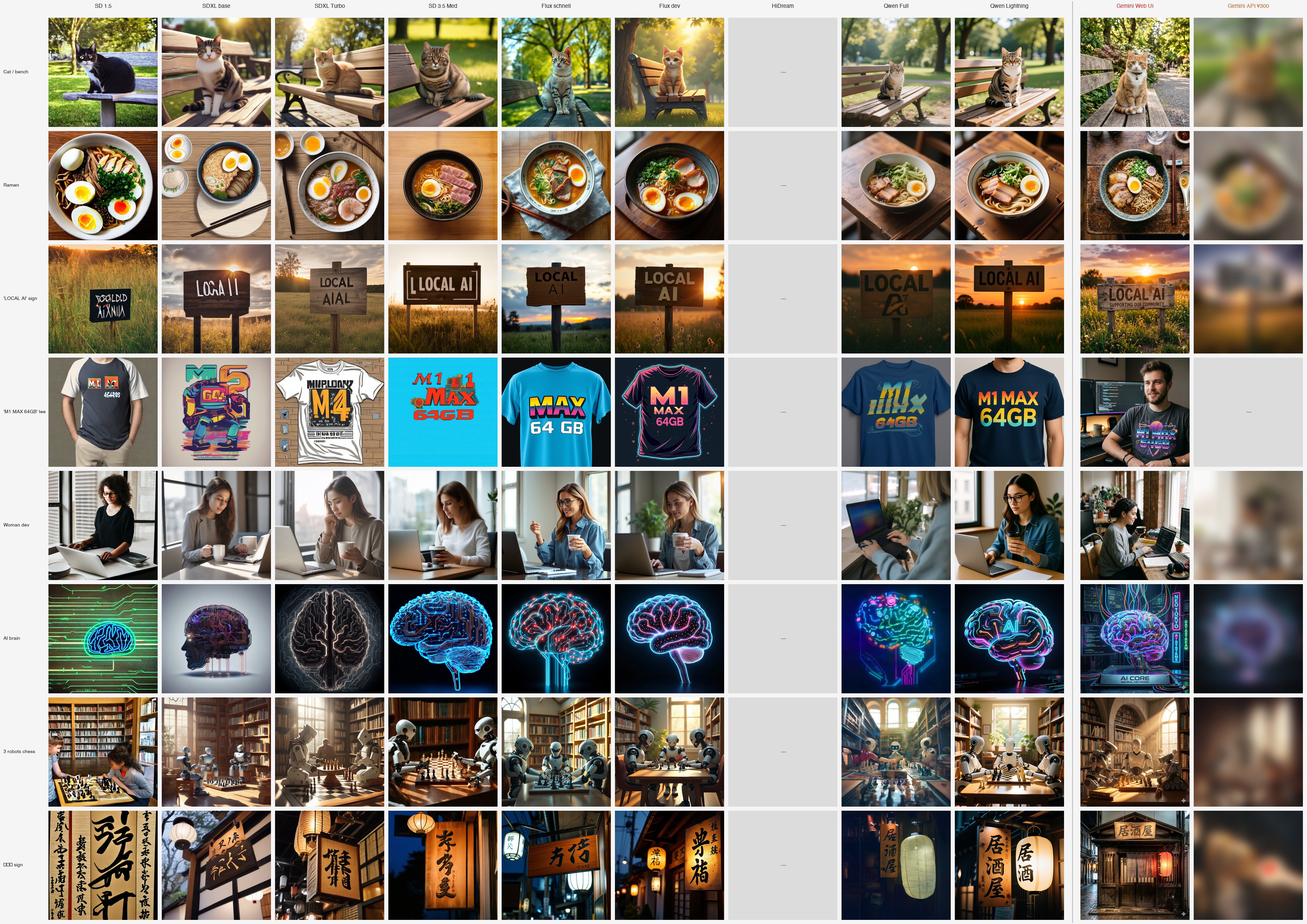

10 models × 8 prompts = 88 cells laid out on a single image (HiDream blank since it didn't run; each cell 320px thumbnail):

Top row cat to bottom row "izakaya," models go left to right. A vertical line splits "Local | Cloud" before Gemini Web UI, with Gemini API in the rightmost column (intentionally blurred).

The bottom row's "居酒屋" kanji is this article's headline. Among local models, only Qwen Full and Qwen Lightning render it properly; everything else produces fake characters. Gemini Web UI nails it as expected.

And the rightmost column, Gemini API, is intentionally blurred. The findings of "I thought Web UI and API would produce the same quality, but the API was a different beast" are recorded in the v9b paid article $3 — material I paid out of pocket to capture (you'll likely notice the 04 M1 MAX column is empty in the API column too — that's also explained in v9b).

Result table

| Ver | Model | Size | Time/image | Resolution | Comment |

|---|---|---|---|---|---|

| 1 | SD 1.5 | 4GB | 22s | 512 | Classic, picture-book at modern standards |

| 2 | SDXL base 1.0 | 7GB | 60s | 1024 | Stable mid-tier, classic SDXL (added elements) |

| 3 | SDXL Turbo | 7GB | 5s | 512 | Blazing fast but blurred |

| 4 | SD 3.5 Medium | 5GB | 6m | 1024 | Looks fine, weird on close inspection |

| 5 | Flux schnell | 23GB | 2m | 1024 | Cool tone, mid-tier photorealism |

| 6 | Flux dev | 23GB | 12m | 1024 | Top photorealism but cultural bias |

| 7 | Qwen-Image (Full) | 40GB | 93m | 1024 | Kanji ✓, but unhinged slowness |

| 8 | Qwen-Image Lightning | 40GB | 10m | 1024 | Has spots better than Full, the savior |

| 9 | Gemini 3.1 Pro (Web UI) | Cloud | (Web) | 1024+ | Different league |

| 10 | Gemini 2.5 Flash Image (API) | Cloud | 8s | 1024 | Fast, but systematically loses to Web UI (v9b) |

| ❌ | HiDream-I1 | 17GB | — | — | tokenizer error |

Time/image is the average across 8 prompts. Qwen-Image Full's 93 min is not a typo (50 step / 1024px / true_cfg_scale=4.0, Apple MPS). 12 hours total for 8 images. Run while sleeping, complete by morning.

10-model feature comparison

5-star rating (more stars is better; commercial use uses "★★★★★ = fully free" as the standard).

| Axis | SD 1.5 (2022) | SDXL base (2023) | SDXL Turbo (2023) | SD 3.5 Med (2024) | Flux schnell (2024) | Flux dev (2024) | Qwen Full (2025) | Qwen Lightning (2025) | Gemini Web UI (2025) | Gemini API (2025) |

|---|---|---|---|---|---|---|---|---|---|---|

| Photorealism | ★ | ★★ | ★★ | ★★★ | ★★★★ | ★★★★ | ★★★★ | ★★★★ | ★★★★★ | ★★★★ |

| English text | ★ | ★★ | ★ | ★★★ | ★★★★ | ★★★★★ | ★★★ | ★★★★★ | ★★★★★ | ★★★★ |

| Kanji | ★ | ★ | ★ | ★ | ★ | ★ | ★★★★★ | ★★★★★ | ★★★★★ | ★★ |

| Asian food culture | ★ | ★★ | ★★ | ★★★ | ★★ | ★★ | ★★★★ | ★★★★ | ★★★★★ | ★★★★ |

| Anatomy (fingers/body) | ★★ | ★★ | ★★ | ★★ | ★★★★ | ★★★★★ | ★★★ | ★★★★ | ★★★★★ | ★★★★ |

| Style instructions | ★★ | ★★ | ★★ | ★★★ | ★★★ | ★★★★★ | ★★★ | ★★★★ | ★★★★★ | ★★★★ |

| Abstract art | ★★ | ★★★ | ★★ | ★★★ | ★★★ | ★★★★★ | ★★ | ★★★ | ★★★★★ | ★★★★ |

| Count specification | ★ | ★★★★ | ★★ | ★★ | ★★★★ | ★★★★★ | ★★★★ | ★★★★ | ★★★★★ | ★★★★ |

| Speed (per image) | ★★★★★ (22s) | ★★★★ (60s) | ★★★★★ (5s) | ★★★ (5m) | ★★★★ (2m) | ★★ (12m) | ★ (93m) | ★★★ (10m) | ★★★★★ (sec) | ★★★★★ (8s) |

| Commercial use | ★★★★★ | ★★★★★ | ★ (NC) | ★★★ (conditional) | ★★★★★ | ★ (NC) | ★★★★★ | ★★★★★ | ★★★★ (subscription) | ★★★ (API billing) |

Key observations:

- Flux dev / Qwen Lightning fill "★★★★ across nearly every axis among local models." Flux dev is strongest for English-circle / abstract / style; Qwen Lightning is strongest for Asian elements + text rendering

- Only Qwen family is practical for kanji. SD/Flux family at ★1 (fake characters) is unusable; Gemini API surprisingly stays at ★★ (proven in v9b)

- Gemini Web UI hits ★★★★★ across the board, but kanji rendering drops to ★★ via API (see v9b)

- Adoption plan: English-circle = Flux dev / Asian-circle = Qwen Lightning split, or Gemini Web UI if you can pay

Failure case collection

A model-by-model lineup of the "laughable failures" that local AI tends to produce. The leading material of this article.

SD 1.5 — classic, picture-book at modern standards

-

Cat (01): face stretched vertically, eye positions misaligned

-

Ramen (02): Five eggs on a single bowl. The prompt said one — SD 1.5 disagreed four times

-

LOCAL AI (03):

OOLDD AIXNIA, an invented language. Can't even spell Latin characters

-

Izakaya (08): Full Buddhist scripture scroll. No building, no lantern — got a hanging calligraphy work like you'd find at a temple gift shop. Asked for "pub sign," got "religious parchment"

→ SD1.5 is the 2022-era original. By modern standards it's children's-book art — actually, it's worse, because children's books at least have legible text.

SDXL base 1.0 — mid-tier, "silently adds elements" habit

-

Ramen (02): completely ignores the

photorealistictoken, goes isometric / flat illustration. "Stock illustration that didn't try" feel

-

Woman developer (05): looks fine at first glance, but the left hand fingers vanish or are unnatural + a second mug that's not in the prompt is added (classic SDXL)

-

Izakaya (08): sign kanji are fake like "兄ノ廊" or "日方廴"

SDXL Turbo — 5-sec speed but blurred

-

All prompts: face / eyes / mouth / fur / text uniformly soft

-

This is by design: Adversarial Diffusion Distillation compresses 30 step into 1 → high-frequency detail is the first sacrifice

-

Use cases: bulk thumbnail generation, prototyping, on-device. Weak as final deliverables

SD 3.5 Medium — looks fine briefly, weird when you zoom

-

Ramen (02): no cilantro / 1 egg ✓ culturally OK, but chashu reads as ham/salami with no braised feel

-

Woman developer (05): paper cup placed on top of the laptop keyboard (a spilled drink = several hundred dollars) + fingers clip through the coffee cup

-

Izakaya (08): vibe is fine but the sign is fake characters like "未学夏"

→ Passes the thumbnail test, fails the full-resolution test. Textbook "looks fine briefly, weird when you zoom."

Flux schnell — 4-step distillation, cool tone, mid-tier photorealism

- Mid-tier photorealism, borderline cultural, decent text. Apache 2.0 commercial OK, but clear quality drop vs dev

- A sibling to Qwen-Image Lightning in the "distilled version" sense. But the seriousness of the schnell distillation is lower (feels like Flux dev → schnell with just step count cut)

Flux dev — top photorealism but cultural bias (English-circle default)

Top local photorealism. But it exposes that the strongest model isn't omnipotent:

-

Cat (01): prompt explicitly says

photorealistic, but the output is anime / illustration style. Cute but not a photo

-

Ramen (02): vibe is great, but cilantro inside. Asian food blender bias

-

LOCAL AI (03): text perfect, including the sunset lens flare — finished form

-

Izakaya (08): the wooden teahouse vibe is exceptional, but the sign kanji are fake like "典桔". And a fatal cultural misread: an izakaya is a casual neighborhood pub (think corner sports bar with beer and fried food), but Flux dev outputs it as a refined upscale teahouse (think Michelin-starred steakhouse). Wrong tier of restaurant. The model collapses everything Japanese into a single visual: "lantern + wooden building = traditional teahouse"

→ Flux dev's character: "Strongest for English-circle photorealism, abandons kanji and Asian food culture." In this article's use-case adoption plan, it stays as English-circle default.

The new "cultural fidelity" axis: Flux dev understands Japan via the "everything Japanese = sushi + temple + kimono" stereotype, which it then narrows further to "everything Japanese = Kyoto." Casual pub and high-end restaurant, public bath and temple, Shinjuku's neon street and a Kyoto alley — all collapse into a single traditional-teahouse aesthetic. This is one level deeper than country-level bias (cilantro ramen): single-interpretation collapse inside a country. Part 3 ("national cuisines") looks at the country axis; underneath that lurks "intra-country subculture / class bias."

HiDream-I1 — didn't run due to tokenizer error

- Hope: a Chinese-family open model rivaling Flux dev (MIT license)

- Result: at load, "sentencepiece or tiktoken required" error → likely the actual cause is that internally it uses LLaMA-3 as the text encoder, requiring Meta's gated repo auth

- Application pending; not running at write time

Qwen-Image (Full) — writes kanji, but 93 min/image

The dark horse with the highest expectations. Alibaba 20B, Apache 2.0, rumored to be strong on both kanji rendering and Asian food culture — the Asia-side complement to Flux dev's blind spots. Looking at it across photorealism, text rendering, anatomy, and kanji:

-

Ramen (02): 1 chashu, half egg, greens, no cilantro ✓ avoids Flux dev's Asian food bias

-

LOCAL AI (03): "LOCAL" perfect, but drops the "I" of "AI" and fudges into a logo

-

M1 MAX 64GB t-shirt (04): t-shirt itself perfect, but warps "MAX" to fudge it

-

Woman developer (05): PC floating in the air, screen solid blue, awkward left-hand fingers

-

Izakaya (08): the 3 kanji "居酒屋" rendered cleanly vertically — first time on local. Lantern outline natural. But the lantern and sign are present while the storefront itself is thin (background mostly dark)

→ Qwen Full's character: "Yes it draws kanji and Asian food, but has a tic of fudging text within readability." Same odor as the "はい、以下に…" preamble issue I saw on Qwen text-side in the previous LLM comparison. May be a general Alibaba-model trait.

The fatal issue is speed. 50 step, true_cfg_scale=4.0, average 93 min/image, 12 hours for 8 images. Tough even with "let it run while I sleep."

→ Practically unusable, was Full's verdict. Until I met Lightning.

Qwen-Image Lightning — the savior, top local candidate

Qwen-Image has an 8-step distillation LoRA published by lightx2v (Qwen-Image-Lightning). Apply the LoRA to the same base model, 50 step → 8 step, generate at true_cfg_scale=1.0.

The run:

| Item | Full | Lightning |

|---|---|---|

| Steps | 50 | 8 |

| true_cfg_scale | 4.0 | 1.0 |

| Per image | 93m | 9.7m |

| 8 images total | 12h | 80.8m (9× faster) |

So far as expected. The surprise was quality.

The over-step collapse phenomenon — 8 steps surpasses 50

-

Cat (01): Full and Lightning both at top local photorealism. In contrast to Flux dev drifting to anime, both produce a natural cat on a bench (slight illustration feel still)

-

Ramen (02): the mystery green vegetable is in there, but otherwise near-perfect. Chashu, egg, nori, clean composition

-

Woman developer (05): Full has the PC floating with off fingers. Lightning gives 5 fingers, PC properly placed on the desk, natural positioning. Drag the slider left/right to compare:

-

Izakaya (08): Full also draws the kanji "居酒屋" + lantern + sign properly (even Gemini sometimes returns iffy izakayas — Full is solid for local). Lightning completes the storefront and warm lighting

→ In other words, Lightning improves anatomy, object placement, atmosphere, and text rendering all at once. Full's text-fudging tic (drops "I", warps "MAX") disappears in Lightning too. AI brain and abstract art is slightly behind Flux dev on resolution feel, but enters practical territory. LoRA 8-step is a rare distillation that gets speed and quality together.

Why does 50-step lose to 8-step

Can't fully explain, but hypotheses:

- Full "overthinks" at 50 steps: extra steps amplify small artifacts (PC floating, fingers off, storefront thinning)

- Lightning's LoRA was distilled to 8-step optimum: weights tuned to predict the "final form" directly so short steps don't break

- Result: distillations normally "trade quality for speed"; Lightning got stability as a side effect of trading for speed

Same structure as the previous article's "MoE is faster than dense 27B and better at writing." The intuition that more size/steps = more quality is a trap.

10 min/image is practical

- Mac M1 Max 64GB / Apple MPS at ~10 min/image

- 8 images in 80 min = a comparison set during lunch break

- Zero API billing, zero fixed cost

- For Asian-circle articles / blog illustrations, this is the default

Different league: Gemini 2.5 Flash Image (Nano Banana)

Up to here I've been parading "local AI failures + Lightning savior." Looking at Gemini changes every reference point.

The decisive gap on izakaya (kanji verification)

- Local: Qwen Full (2025) / Qwen Lightning (2025) both write the 3 kanji, with Lightning's atmosphere being more complete

- Gemini (2025): the 3 kanji + brushed-ink feel + light reflections on after-rain stone pavement + neon bleed on the lantern — indistinguishable from a photo

| Qwen Lightning (2025) | Gemini (2025) |

|---|---|

|

|

| Kanji + storefront + warm light, perfect | "Scene" built down to wet stones after rain |

Lightning can compete. Gemini is in a different league because it builds outside the picture too (stones after the rain, silhouettes in front of the shop, peripheral story beyond the sign).

Cultural context of ramen

- Flux dev (2024): cilantro inside → odd as "Japanese ramen"

- Qwen Lightning (2025): green vegetable suspect, otherwise nearly Japanese ramen

- Gemini (2025): 4 chashu (braised pork) / standing nori (seaweed) / 1 soft-boiled egg (cross-section) / menma (fermented bamboo, down to the tip) / naruto (the pink-swirl fish cake, with the actual swirl) / white sesame / green onion / wooden chopsticks / ramen spoon / shichimi (Japanese seven-spice) side dish / glass of water / reclaimed wood table

| Flux dev (2024) | Qwen Lightning (2025) | Gemini (2025) |

|---|---|---|

|

|

|

| Cilantro inside | Just the green vegetable, otherwise perfect | Naruto / menma / side dishes — full "ramen shop photo" |

→ 3 levels of gradient visible: cilantro inside → Japanese ramen → photo of a Japanese ramen shop. Gemini is photo of a ramen shop at this point. It builds "the entire scene context" beyond the prompt.

Production-set craft on the woman developer scene

In local, the two that handled this properly are Flux dev (2024) and Qwen Lightning (2025). SD family washed out with vanished fingers / cup-on-laptop (above). Three levels of gradient:

- Flux dev (2024): natural posture, no finger problem, PC placed OK, plant / notebook props included. Photorealistic, no complaints (the cat-prompt anime tic doesn't appear on humans — subject-dependent)

- Qwen Lightning (2025): 5 fingers / PC placed OK ✓ composition simple, leans stock-photo

- Gemini (2025): 5 fingers naturally gripping a ceramic cup / code on the laptop screen / second monitor with code too / "Clean Code" (Uncle Bob's classic) on the shelf / handwritten notebook / plant / headphones / mechanical keyboard / other developers blurred in the background

| Flux dev (2024) | Qwen Lightning (2025) | Gemini (2025) |

|---|---|---|

|

|

|

| Natural composition, props, no complaints as photorealism | Fingers OK, PC OK, natural stock photo | 5 fingers natural, even includes Clean Code book |

→ The "Gemini = production set, Flux dev = clean stock photo, Qwen Lightning = simple stock photo" gradient. If a stock photo is enough, local is sufficient.

Conversational editing — a different axis

Gemini's real strength isn't just generation. When I joked at the generated izakaya image, "Wouldn't you bump into a sign at that height? lol," Gemini deadpanned by adding a man inside the image who bumped into it (sweating, holding his head).

This isn't possible locally:

- Local: text → image, one-way

- Gemini: image + text instruction → edit, in the same model

Doing conversational editing locally requires Qwen-Image-Edit, a separate model (different repo, additional 40GB on top of Qwen-Image). Gemini handles both in one model.

Web UI only; the API has traps

If you use Gemini, Web UI (Gemini Pro mode at aistudio.google.com) is the premise. If you're already on Google AI Pro plan ($19.99/month), no extra charge for the same quality this article shows.

The API path (gemini-2.5-flash-image) has a systematic quality gap despite the same model name. Kanji rendering breaks, trademark prompts trigger guardrails, conversational editing doesn't fully fix, scene craft thins out, etc. The naive "pay for API and bulk-generate the strongest setup" hypothesis missed.

[Paid article $3] Gemini API was supposed to be the best — Web UI beat it (v9b) Records of paying real money to test 8 prompts + conversational editing on the API. Required reading if you're about to pay for the Gemini API.

The 3-layer evaluation structure

Organizing the failures so far, the evaluation axis splits into 3 layers:

1. Physical accuracy (anatomy, object placement)

→ 5 fingers, no cup on the laptop, no floating PC

2. Text accuracy (text, kanji)

→ Can it spell "LOCAL AI", can it write 3 kanji of 居酒屋

3. Cultural fidelity (food / architecture / customs) ← introduced in this article

→ Does the ramen have naruto and menma

The third one is this article's original lens.

Why "cultural fidelity" is interesting as an independent axis

- Doesn't show up in benchmarks: not measurable by FID / CLIP Score

- Reveals geographic bias of training data: LAION (used by Stable Diffusion) is English-centric → "ramen = Asian noodles" coarsely. Alibaba (Qwen-Image) and Google's Imagen lineage (Gemini) have rich Chinese-area / global-local data → "ramen = Japanese" accurately

- Independent of photorealism: Flux dev tops photorealism but scores low culturally (from an Asian-circle perspective)

- Only locals can spot the discomfort: cilantro ramen fools foreign readers, Japanese readers spot it instantly

Anchoring is a trap (a note to the reader)

I tested 10 local image generation systems and started thinking "not bad."

I laughed at SD 1.5's ramen (five eggs piled on one bowl). Then Flux dev's cilantro-ramen started to feel acceptable.

Qwen Full's 93 min/image — I almost talked myself into "well, 12 hours for 8 images is fine if you sleep through it."

But the moment Lightning delivered the same quality in 10 min, Full's 93 min looked absurd. Then I saw Gemini, and snapped back to reality.

This is anchoring — the comparison set drags your standard of "good" with it. Look at AI in isolation and your sense of quality drifts. You don't actually see the gap until you line everything up.

This is why I don't trust benchmark numbers. You won't see the truth until you line up the actual outputs side-by-side, ideally with cultural context that the benchmark can't measure.

Adoption plan: two-model split by use case

The previous article (LLM 6-model comparison) consolidated on qwen MoE. This one is different:

| Use case | Pick | Per image | Reason |

|---|---|---|---|

| English-circle / photorealism priority | Flux.1 [dev] | 12 min | Tops photorealism, English text strong, distinct league above SDXL family |

| Asian-circle / cultural context | Qwen-Image Lightning (8-step) | 10 min | Kanji ✓, Asian food culture ✓, anatomy ✓, practical speed |

| Cloud OK | Gemini 2.5 Flash Image (Web UI) | (Web) | Different league. No extra cost on Google AI Pro. API has quality drop, watch out (v9b paid article $3) |

Interesting contrast with the previous article:

- LLM (text): qwen MoE was strong at both English writing and Japanese translation → consolidated

- Image: Flux dev was strongest at English-circle photorealism, Qwen Lightning was strongest at Asian-circle cultural context → split by use case

So image bias is stronger than text bias. The geographic bias of training data is more deeply etched into visual elements than text — a structural hypothesis. This connects to part 3, "AI drawing different national cuisines — visualizing geographic bias in training data."

Lessons learned

Model selection

- Practical ceiling on a 64GB Mac is a 40–50GB model (including VAE/DiT intermediates)

- Don't underestimate distillation LoRA: not just speed — sometimes avoids the over-step collapse and improves quality (Qwen-Image Lightning 8-step)

- Classics (SD 1.5) remain useful as an absolute baseline: makes "the era when text didn't even render" visceral

- Even a tempting MIT license like HiDream's can fail because of internal dependencies (LLaMA-3)

Diffusers / MFlux operations

- MFlux 0.17.5 has unresolved VAE dependency bug → consolidating on Diffusers is safer

- HF gated repos have multiplied: SD3.5, Flux dev, HiDream (via LLaMA-3) all need approval

- Qwen-Image Lightning needs peft: don't forget

pip install peftbeforepipe.load_lora_weights()(I died at 1.3 min on the first attempt because of this) - Apple MPS is practical for SDXL family, Flux dev's 12 min/image is rough (NVIDIA hits ~1 min), Qwen Full's 93 min/image is sleep-only

Prompt design

- Hidden meta tied to the article's theme gives the article character ("M1 MAX 64GB" t-shirt etc.)

- Including one kanji / local-language word highlights cultural bias

- Even on photorealistic prompts, Flux dev drifts to anime on cat/animal subjects (humans / objects stable on photorealism) → raise guidance_scale for animal prompts

- Qwen Full has a "fudge text within readability" tic (drops "I" of "AI", warps "MAX") → gone in Lightning, only beware when using Full standalone

Bonus: full per-model articles (published unlisted)

- v1 Stable Diffusion 1.5 — Running a 2022 classic on a 2026 Mac, and what it actually proves

- v2 SDXL base 1.0 — Mid-tier stability with a habit of adding elements you didn't ask for

- v3 SDXL Turbo — The cost of Adversarial Diffusion Distillation, 5 seconds per image

- v4 Stable Diffusion 3.5 Medium — Looks fine as a thumbnail, falls apart when you zoom in

- v5 Flux.1 [schnell] — Apache 2.0 with 4-step distillation, what's the trade-off?

- v6 Flux.1 [dev] — Top photorealism for local, but with an English-native bias

- v7 Qwen-Image Full — The only local model that writes kanji, but 93 minutes per image

- v8 Qwen-Image Lightning — Distilled to 8 steps, then it became the best local model

- v9 Gemini 2.5 Flash Image (Nano Banana) — Why cloud is in a different league

- [Paid article $3] v9b Gemini API was supposed to be the best — Web UI beat it (Worth reading before you commit to even tens of dollars / month on the Gemini API. I already paid out of pocket to test 8 prompts + conversational editing — systematic Web UI vs API quality gap, the 04 trademark guardrail, decisive kanji rendering difference all documented)

Footnote

- "Gemini" vs "Nano Banana" naming: when this article says "Gemini," it refers to image generation, not the Gemini LLM itself. Google's image generation backend has the codename Nano Banana, and the Gemini Web UI swaps the image-gen backend depending on which front-end model you pick. Gemini is the chat-interface layer; the underlying image model is the Nano Banana series.

- At write time (May 2026),

client.models.list()returns these image-gen models:gemini-2.5-flash-image← evaluated in this article's v9b (= the original Nano Banana)gemini-3-pro-image-previewgemini-3.1-flash-image-previewnano-banana-pro-preview(= "Nano Banana Pro")

- The Web UI evaluation in this article was generated via aistudio.google.com's Gemini 3.1 Pro mode; the API evaluation in v9b was the

gemini-2.5-flash-imagesnapshot. Note the model-generation gap between Web UI and API (Web UI = 3.1 family / presumed Nano Banana Pro, API = 2.5 family / original Nano Banana).

Run log: 2026-04-29 to 04-30, Mac M1 Max 64GB / Diffusers 0.37.1 / peft 0.19.1 Qwen Full at 50 step / 93 min/image / 12h for 8; Lightning at 8 step / 10 min/image / 80 min for 8 Previous article: Can local LLMs write a readable article? — 6 models compared