Can Local LLMs Write Articles Worth Reading? — A 6-Model Comparison

It Started With a Mac Upgrade

I'd been using a final-generation Intel MacBook Pro 16. Fine for daily work, but running multiple VSCode + Claude Code instances while playing background music on YouTube would frequently make things sluggish — typing lag of several seconds, IME freezes, fans roaring, all the classics whenever Chrome's memory hunger and SSD access piled up.

A few years into the Apple Silicon transition, the used market for M1 Max / 64GB machines had finally settled down, so I'd been watching for a deal. An unused MacBook Pro 16 (M1 Max / 64GB) came up on the second-hand market at a steep discount, and I bought it.

After the switch, the freezes were gone, of course. But that created a new problem — or an "opportunity": "With 64GB of RAM and an M1 Max GPU, what's the best use of it?" A luxurious dilemma.

My everyday work doesn't even use 32GB. Letting the remaining 30GB+ sit idle felt wasteful. That's when I remembered the AI weekly news auto-generation pipeline I'd built for Draft (the article platform I run). I'd been running it on qwen2.5:72b (47GB), but the model was getting old, and I'd been wondering what would happen if I tried newer generations or different vendors.

"With 64GB I could just run six models in sequence and compare the output." That was the starting point.

Distilled, the question is this: if you push local LLMs to their limit, can they write something a human actually wants to read?

What I Mean By "News"

Before you can produce "something worth reading," you have to define what counts as "news." I sorted through the candidates:

- A list of RSS headlines — That's a table of contents for news, not news itself.

- Aggregated social media posts — Real-time, but tends to devolve into individual emotion and speculation without numerical backing. Good for trend feel, not trustworthy analysis.

- A self-built data collection pipeline + LLM processing — RSS, stock prices, regulatory info, and funding rounds collected myself, presented with data backing.

I went with the third. But merely listing events stops at "organized data." What makes news a reading experience, I think, is connecting events to show what's actually happening.

For example:

Demand for AI data center construction rises → Infrastructure and energy company stocks rally

If you can produce that level of "connect the dots" analysis, it's valuable to readers. If you can't, it's just a "this week's events list."

I've kept the option open to eventually swap this pipeline over to a commercial API (Claude / GPT-4 etc.). But for now I want to see how far you can go with "a local LLM an individual can reasonably afford." The reason is simple: API billing creates a fixed cost you keep paying whether or not you have readers. With local, once you've bought the hardware, the running cost is essentially electricity. That keeps you nimble during the experimental, low-traffic phase.

TL;DR

- I fed the same AI news data (Phases A-C held fixed and shared) to six open-weight LLMs and had each one write only Phase D onward (Headlines / Deep Dives / Stories / Takeaways) for comparison.

- Everything ran on Mac M1 Max 64GB + Ollama. Zero cloud API.

- Conclusion: qwen3.6:35b-a3b-q8_0 MoE (38GB / 10.6 minutes) is the overall winner on "numerical density × speed × Stories causality." The writing carries a sense of authorial enthusiasm.

- Big failure: qwen3-next:80b (50GB MoE) ran into both the physical memory limit of a 64GB Mac and the thinking-model trap.

- Side product: discovered many local-LLM operational pitfalls — MLX variants, Wired roundup articles, thinking mode, and more.

Baseline State and Remaining Issues

The qwen2.5:72b (47GB) pipeline I'd been using was already producing weekly articles at Draft-publishable quality. But three reader-facing dissatisfactions remained:

- Monotonous reading — Headlines → Deep Dives → Takeaways line up, but emphasis doesn't come through.

- Bottom lines escape into abstraction — Section closers default to clichés like "reshaping the industry" or "a new era of..."

- The model generation is old: qwen2.5 is over a year old, and I hadn't tried Qwen3.6 / Llama3.3 / Gemma3, etc.

Items 1 and 2 I addressed via prompt improvements (Hook + Editor's Pick, Takeaways converted from imperative to suggestive form, Bottom-line banned-phrase list, etc.). Item 3 is the scope of this experiment.

Pipeline Structure (Phases A-G)

Each article is generated through the following 7 phases. Each phase has an independent role, and intermediate JSON saved between them allows a "resume" execution that runs only the later stages.

Phase A: Data Collection

- Pulls last week's articles from 9 RSS feeds (Wired AI / TechCrunch AI / The Verge AI / MIT Tech Review / Hugging Face Blog / Google AI Blog / Ars Technica / etc.)

- Each article is body-extracted with trafilatura (HTML → clean text)

- S&P500 stock and volume data from the Trade2 DB on the mini server

- AI-related bills from GovTrack / OpenStates

- Funding round info

Phase B: Theme Extraction

- One LLM call outputs "this week's 3-4 main themes" as JSON

- Each theme has narrative / evidence / market_impact / so_what / key_facts

- Grouping accuracy is improved via "only bundle news from the same industry / same buyer" prompting
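The Phase B output shape can be pinned down with a small validator before anything downstream consumes it. This is a minimal sketch, assuming the LLM returns a JSON object whose top-level "themes" array holds objects with the five fields named above. The "themes" key, the field types, and the names parse_themes and Theme are hypothetical, not the pipeline's actual code.

```python
import json
from dataclasses import dataclass, field
from typing import List

REQUIRED_FIELDS = ("narrative", "evidence", "market_impact", "so_what", "key_facts")


@dataclass
class Theme:
    narrative: str
    evidence: object  # shape not specified in the article
    market_impact: str
    so_what: str
    # Exact figures carried verbatim so later phases can't round them away.
    key_facts: List[str] = field(default_factory=list)


def parse_themes(raw: str, min_themes: int = 3, max_themes: int = 4) -> List[Theme]:
    """Parse Phase B output (assumed shape: {"themes": [...]}) and check each theme."""
    items = json.loads(raw).get("themes", [])
    if not min_themes <= len(items) <= max_themes:
        raise ValueError(f"expected {min_themes}-{max_themes} themes, got {len(items)}")
    themes = []
    for i, item in enumerate(items):
        missing = [name for name in REQUIRED_FIELDS if name not in item]
        if missing:
            raise ValueError(f"theme {i} is missing fields: {missing}")
        themes.append(Theme(**{name: item[name] for name in REQUIRED_FIELDS}))
    return themes
```

Failing loudly here is cheaper than discovering a missing key_facts list after Phase D has already written around it.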

Phase C: Theme Deep Research

- For each theme, the LLM asks "what's missing" (C2)

- DuckDuckGo web search retrieves answers (C3)

- Results are merged into enriched analysis (C4)

- Repeats up to 3 rounds until the LLM judges "I have enough"
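The C2 → C3 → C4 cycle is a bounded refinement loop. The sketch below assumes three injected callables. find_gaps is the LLM listing open questions, and it returns an empty list when it judges the material sufficient. search wraps whatever web search is used (DuckDuckGo here). merge is the LLM folding the results into the analysis. All three names are hypothetical, and the sketch assumes no particular search library's API.

```python
from typing import Callable, List


def deep_research(
    analysis: str,
    find_gaps: Callable[[str], List[str]],   # C2: LLM lists what's missing
    search: Callable[[str], str],             # C3: web search for one question
    merge: Callable[[str, List[str]], str],   # C4: LLM merges results into the analysis
    max_rounds: int = 3,
) -> str:
    """Enrich one theme's analysis for at most max_rounds rounds of gap-filling."""
    for _ in range(max_rounds):
        gaps = find_gaps(analysis)
        if not gaps:  # the LLM judged "I have enough"
            break
        results = [search(question) for question in gaps]
        analysis = merge(analysis, results)
    return analysis
```

Passing the LLM and search steps in as functions keeps the loop testable without a model or network. max_rounds=3 mirrors the cap described above.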

Phase D: Article Writing ← Model variation point

- D0: Hook + Editor's Pick (one-sentence hook + one must-read of the week)

- D1: Headlines (all news as one-line bullets, opinion pieces excluded)

- D2: Deep Dives (3-4 paragraphs per theme, 4 themes in parallel)

- D3: Stories (writes 🔗 Connecting the Dots if cross-theme connections exist; skipped if weak)

- D4: Takeaways (suggestive bullets, imperative voice forbidden)
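Each D2 Deep Dive is an independent request to the local model server, so "4 themes in parallel" can be sketched with a thread pool. write_deep_dive is a hypothetical callable that performs one LLM call for one theme. Whether the requests actually run concurrently depends on the server's settings (for Ollama, OLLAMA_NUM_PARALLEL), and each extra parallel slot reserves more KV cache memory.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def write_deep_dives(
    themes: List[dict],
    write_deep_dive: Callable[[dict], str],  # one LLM call per theme (hypothetical)
    max_workers: int = 4,
) -> List[str]:
    """Run one Deep Dive call per theme concurrently; results keep theme order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map yields results in input order, regardless of completion order.
        return list(pool.map(write_deep_dive, themes))
```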

Phase E: Self-Review + Revise

- LLM as reviewer checks "duplication / fabrication / generic phrasing / imperative tone"

- Separate LLM call applies the feedback as revisions

Phase F: Final Polish + Publish

- Grammar / tone smoothing + internal-reference removal

- Programmatic removal of a "banned phrases list" ("reshaping the industry", "a new era of", etc.)

- POST to Draft API to publish
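One way to implement the programmatic banned-phrase pass is to drop every sentence that contains a banned phrase. Cutting only the phrase would leave a broken sentence behind. This sketch assumes the sentence-dropping approach; the article doesn't say which of the two the pipeline actually does. Only the two phrases quoted above come from the real list.

```python
import re
from typing import Iterable, Tuple

BANNED_PHRASES = ("reshaping the industry", "a new era of")  # extend as needed

# Naive sentence split: whitespace that follows ., ! or ?
_SENTENCE_SPLIT = re.compile(r"(?<=[.!?])\s+")


def strip_banned_sentences(paragraph: str, banned: Iterable[str] = BANNED_PHRASES) -> Tuple[str, int]:
    """Drop each sentence containing a banned phrase (case-insensitive).

    Returns the cleaned paragraph and the number of sentences removed.
    """
    patterns = [re.compile(re.escape(p), re.IGNORECASE) for p in banned]
    kept, removed = [], 0
    for sentence in _SENTENCE_SPLIT.split(paragraph.strip()):
        if any(p.search(sentence) for p in patterns):
            removed += 1
        else:
            kept.append(sentence)
    return " ".join(kept), removed
```

The sentence splitter is deliberately naive, so abbreviations such as "U.S." may cause extra splits. Running it one paragraph at a time keeps the markdown structure intact.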

Phase G: Japanese Translation (added this round)

- Pass the English article_final.md to the MoE model for Japanese rendering

- Strict adherence to the plain "だ・である" register (the declarative written style), with proper nouns and numbers fully preserved

- Average 3.8 minutes per article (detailed below)

Validation Structure

For this experiment, I froze the Phase A/B/C output and ran only Phases D-F with each model. A script called resume_en_from_d.py loads the saved JSON and executes from Phase D onward. That makes it a fair comparison: same source material, different writers.
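The resume mechanism works roughly like this. Every phase writes its output to a JSON file. A run that starts at a later phase loads the saved outputs of all earlier phases instead of recomputing them. The file layout (phase_A.json and so on) and the function names are hypothetical, since the internals of resume_en_from_d.py aren't shown here.

```python
import json
from pathlib import Path
from typing import Callable, Dict

PHASES = ["A", "B", "C", "D", "E", "F", "G"]


def run_pipeline(
    workdir: Path,
    steps: Dict[str, Callable[[dict], object]],  # phase letter -> fn(outputs so far) -> output
    start: str = "A",
) -> dict:
    """Run phases from `start` onward, reusing saved JSON for earlier phases."""
    first = PHASES.index(start)
    outputs = {}
    for phase in PHASES[:first]:
        # Frozen earlier phases: load exactly what a previous run saved.
        path = workdir / f"phase_{phase}.json"
        outputs[phase] = json.loads(path.read_text(encoding="utf-8"))
    for phase in PHASES[first:]:
        if phase not in steps:
            continue  # e.g. no Phase G in a comparison run
        outputs[phase] = steps[phase](outputs)
        (workdir / f"phase_{phase}.json").write_text(
            json.dumps(outputs[phase], ensure_ascii=False), encoding="utf-8"
        )
    return outputs
```

With this structure, a Phase D comparison run is run_pipeline(workdir, steps, start="D") with a different model behind the Phase D-F step functions.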

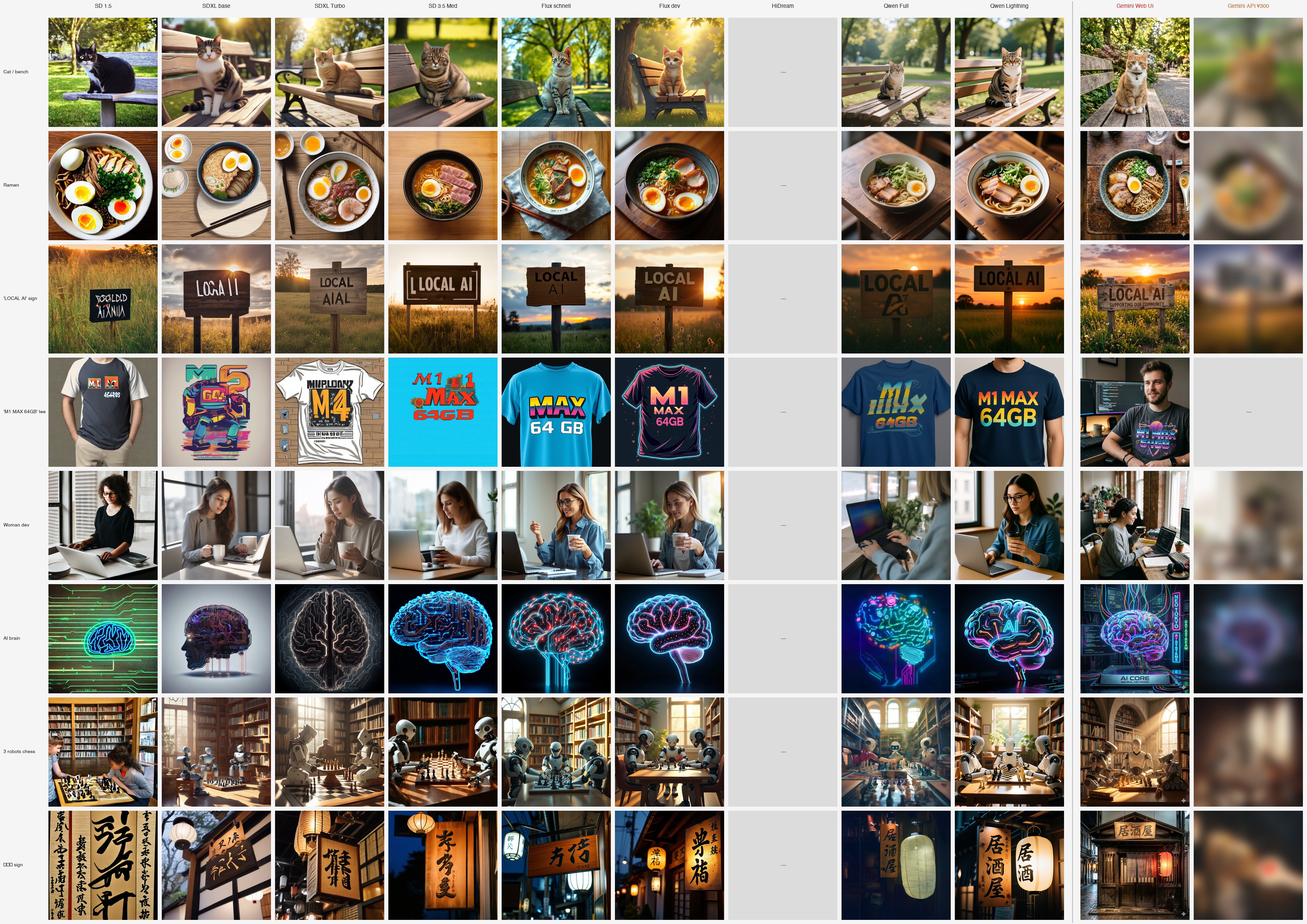

Models Tested

| Ver | Model | Size | Architecture | Pull Source |
|---|---|---|---|---|
| v11 | qwen2.5:72b | 47GB | dense | Ollama |
| v13 | qwen3.6:27b-q8_0 | 30GB | dense Q8 | Ollama |
| v18 | qwen3.6:35b-a3b-q8_0 | 38GB | MoE 35B (active 3B) Q8 | Ollama |
| v14 | llama3.3:70b | 42GB | dense Q4 | Ollama |
| v15 | gemma3:27b | 17GB | dense | Ollama |
| v16 | mistral-small3.2:24b | 15GB | dense | Ollama |
| v17 | deepseek-r1:70b | 42GB | thinking-type | Ollama |
| ❌ v12 | qwen3-next:80b | 50GB | MoE thinking-type | Failed (detailed below) |

Results Summary

| Ver | Model | Size | Runtime | Chars | One-line |
|---|---|---|---|---|---|
| v11 | qwen2.5:72b | 47GB | 50 min | 10,461 | Baseline (old generation) |
| v13 | qwen3.6:27b-q8_0 | 30GB | 27.4 min | 13,633 | MBA-paper voice, careful driver |
| v18 | qwen3.6:35b-a3b-q8_0 | 38GB | 10.6 min | 16,489 | Editor who uses every number, fastest |
| v14 | llama3.3:70b | 42GB | 158.3 min | 13,169 | No 70B dignity, disappointing giant |
| v15 | gemma3:27b | 17GB | 25.3 min | 17,457 | Verbose analyst, quietly inflates numbers |
| v16 | mistral-small3.2:24b | 15GB | 15.6 min | 12,648 | Solid mid-tier |
| v17 | deepseek-r1:70b | 42GB | 239.6 min | 10,686 | Thinking-type; Headlines and Phases E/F all timed out |
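The speed ratios quoted throughout this article can be recomputed straight from the Runtime column. This small helper only makes the arithmetic explicit. The values are copied from the table, and nothing new is measured.

```python
# Runtimes in minutes, copied from the results table.
RUNTIME_MIN = {
    "v11 qwen2.5:72b": 50.0,
    "v13 qwen3.6:27b-q8_0": 27.4,
    "v18 qwen3.6:35b-a3b-q8_0": 10.6,
    "v14 llama3.3:70b": 158.3,
    "v15 gemma3:27b": 25.3,
    "v16 mistral-small3.2:24b": 15.6,
    "v17 deepseek-r1:70b": 239.6,
}


def slowdown_vs(baseline: str, runtimes: dict = RUNTIME_MIN) -> dict:
    """How many times longer each model took than `baseline` (1.0 = same time)."""
    base = runtimes[baseline]
    return {name: round(minutes / base, 1) for name, minutes in runtimes.items()}


for name, ratio in slowdown_vs("v18 qwen3.6:35b-a3b-q8_0").items():
    print(f"{name:28s} {ratio:5.1f}x")
```

Against v18, this gives 2.6× for v13 and 22.6× (about 23×) for v17.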

Numbers Worth Noting

- MoE really is fast: v18 (35B-A3B) has 3B active parameters, so it's 2.6× faster than dense 27B (v13)

- Quantization loss is smaller than expected: Despite the 27B vs 72B size gap between v13 (Q8) and v11 (Q4), v13 won on textual naturalness

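- 70B-class models need caution about CPU spillover: v14 llama3.3:70b shows 102GB including KV cache (context length 131072). It runs split 48% CPU / 52% GPU because it no longer fits in GPU memory and spills over to the CPU. Its runtime ballooned to roughly 3× that of the similarly sized v11 (158.3 vs 50 min).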
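A back-of-the-envelope estimate shows why a 131,072-token context pushes a 70B model out of GPU memory. The standard KV cache formula is 2 (one K and one V) × layers × KV heads × head dimension × context length × bytes per element. This sketch assumes the published Llama 3.3 70B shape (80 layers, 8 KV heads under grouped-query attention, head dimension 128) and an unquantized fp16 cache. Ollama's real allocation also includes compute buffers and other overhead, so this does not attempt to reproduce the 102GB figure.

```python
GIB = 1024 ** 3


def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int, context: int,
                   bytes_per_elem: int = 2) -> int:
    """KV cache size: one K and one V vector per layer, per KV head, per token."""
    return 2 * layers * kv_heads * head_dim * context * bytes_per_elem


# Assumed Llama 3.3 70B shape, fp16 cache (2 bytes per element).
full_ctx = kv_cache_bytes(layers=80, kv_heads=8, head_dim=128, context=131072)
short_ctx = kv_cache_bytes(layers=80, kv_heads=8, head_dim=128, context=8192)
print(f"KV cache at 131072 tokens: {full_ctx / GIB:.1f} GiB")  # 40.0 GiB
print(f"KV cache at 8192 tokens:   {short_ctx / GIB:.1f} GiB")  # 2.5 GiB
```

Add roughly 42GB of weights, and the total already exceeds what a 64GB Mac can give the GPU. Lowering the context length (Ollama's num_ctx option) is the most direct lever.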

Failure Case 1: The qwen3-next:80b Trap

The model I'd been most excited about, qwen3-next:80b (50GB MoE), ultimately didn't work. This was an important lesson about running 80B-class open-weight LLMs on a 64GB Mac.

Attempt 1: think:False (v12b)

Specified "think": false via Ollama's API. The intent: "don't generate thinking, just emit the final response."

Result: the thinking process flowed straight into the response. The article opened like this:

Okay, let's tackle this. The user wants me to final proofread and output the corrected full text. But first, I need to understand what exactly needs to be done. Looking at the user's message, they provided a task:...

The resulting article_final.md ran to 22,773 characters, almost all of it the LLM's monologue. qwen3-next's chat template isn't designed to separate thinking with <think> tags, so think:False simply doesn't take effect.
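"think": false can't be trusted to work for every model. A cheap guard before publishing is to strip tagged reasoning and reject any output that opens like a monologue. The opener patterns below are hypothetical heuristics modeled on the transcript above. Tune them to what your models actually emit.

```python
import re

# Reasoning wrapped in tags, for models whose chat templates do emit them.
_THINK_BLOCK = re.compile(r"<think>.*?</think>\s*", re.DOTALL | re.IGNORECASE)

# Hypothetical openers suggesting the model is narrating its task instead of writing.
_MONOLOGUE_OPENERS = re.compile(
    r"^\s*(okay|ok|alright|let me|let's|first, i need|the user wants)\b",
    re.IGNORECASE,
)


def clean_llm_output(text: str) -> str:
    """Remove tagged reasoning; raise if what remains still opens like a monologue."""
    cleaned = _THINK_BLOCK.sub("", text).strip()
    if _MONOLOGUE_OPENERS.match(cleaned):
        raise ValueError("output looks like leaked reasoning: " + cleaned[:60])
    return cleaned
```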

Attempt 2: think:True (v12c)

"OK then, enable thinking and let it separate into the thinking field." Switched to "think": true.

Result: macOS Jetsam SIGKILL'd Python.

Specifically:

- qwen3-next:80b model itself: 50GB

- Ollama KV cache (including thinking): +9GB

- GPU using 59GB (right at the edge of the 60GB wired_limit)

- Memory left for the OS / Python / Ollama server: 5GB, with free pages ("Pages free") down to 65MB

- macOS Jetsam (the OOM killer equivalent under memory pressure) killed the Python process with exit 144

Running an 80B + thinking model on a 64GB Mac is physically tight. You'd want at least 96GB.

Lessons

- Thinking models inflate the KV cache, so plan for the visible model size + 10-15GB of headroom

- The behavior of Ollama's think parameter is model-dependent (qwen3-next and qwen3.6 are different beasts)

- The practical ceiling on a 64GB machine is around 50GB models (KV cache included)
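The headroom rules above can be turned into a pre-flight check before loading a model. This sketch uses the figures from this setup: a 60GB GPU cap (the raised wired limit) on a 64GB machine. The 8GB minimum left for macOS and everything else is a hypothetical threshold, not a number measured in these runs. For thinking models, pass the 10-15GB headroom as kv_gb.

```python
from typing import List


def preflight(model_gb: float, kv_gb: float, gpu_cap_gb: float = 60.0,
              total_ram_gb: float = 64.0, min_system_gb: float = 8.0) -> List[str]:
    """Warn if a model plus its KV cache won't fit comfortably on this Mac."""
    warnings = []
    gpu_need = model_gb + kv_gb
    if gpu_need > gpu_cap_gb:
        warnings.append(f"needs {gpu_need:.0f}GB of GPU memory, cap is {gpu_cap_gb:.0f}GB: expect CPU spillover")
    left = total_ram_gb - gpu_need
    if left < min_system_gb:
        warnings.append(f"only {left:.0f}GB left for macOS and other processes: risk of a Jetsam kill")
    return warnings


# qwen3-next:80b with thinking, using the figures above (50GB model + 9GB KV cache).
print(preflight(50, 9))
```

The 80B case passes the GPU check (59GB under a 60GB cap) but fails the system-memory check, which matches how that run actually died.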

Failure Case 2: The MLX bf16 Trap

"Since I have an M1 Max, surely Apple's MLX bf16 would give the highest precision?" — that was the thought when I tried qwen3.6:27b-mlx-bf16 (55GB).

Attempt 1: Homebrew Ollama 0.20.5

Error: 500 Internal Server Error: mlx runner failed: MLX not available: failed to load MLX dynamic library

The Homebrew build of 0.20.5 didn't include the MLX library.

Attempt 2: Upgrade to Homebrew Ollama 0.21.2

brew upgrade ollama brought in 0.21.2 + mlx + mlx-c. Retry:

panic: mlx: There is no Stream(gpu, 1) in current thread.
at mlx-c-0.6.0/mlx/c/transforms.cpp:73

A bug in mlx-c 0.6.0 triggered the panic.

Attempt 3: Switch to the Official Ollama .pkg

The official build bundles a different MLX (0.31.1-23-g38ad257) and didn't panic. But prompt processing was catastrophically slow:

- 90 seconds to process a 14-token prompt (still stuck at 13/14 after 5 minutes)

- 41 minutes elapsed and the "say pong" test never completed

bf16 traded away inference speed for precision, to the point of being impractical. I gave up on it for the M1 Max.

Lessons

- MLX bf16 isn't "fast MLX" — it's "precision-first slow MLX"

- Choosing which local LLM to test by size alone is a trap

- The official .pkg and Homebrew builds bundle different MLX (even at the same Ollama version)

Failure Case 3: Wired Weekly Roundup Articles

In a Phase D Deep Dive, I fed the Wired AI piece "It Takes 2 Minutes to Hack the EU's New Age-Verification App." The generated Deep Dive wasn't about the EU age-verification hack at all — it was about Section 702 (US wiretap powers) / Meta Ray-Ban / Deepfake.

The cause was simple: that Wired piece is a weekly roundup. The EU hack only occupies a small portion of the opening; the remaining 4,000 characters cram in other topics. The LLM read the whole body and ended up writing about the latter half.

Fix

I wrote a function extract_title_relevant_text() that scans the body paragraph by paragraph and only extracts paragraphs containing title keywords. Specifically:

- H2 detection: detect a short paragraph matching the title (often acting as an H2 header) and grab from there to the next H2

- Keyword paragraph filter: keep paragraphs containing title-derived words; cut off after 2 consecutive non-matching paragraphs

- Fallback: if extraction is too short, return the original opening
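Here is a minimal sketch of extract_title_relevant_text() that follows the three rules above. Everything beyond those rules is an assumption:

- paragraphs are split on blank lines
- keywords are lowercase words of 4+ characters, minus a small stopword list
- a "header" is a paragraph under 120 characters with no closing punctuation
- a header match requires half the title keywords
- the 300-character minimum and the 1,500-character fallback length are guesses, not the author's values

```python
import re
from typing import Set

# Hypothetical stopword list; words under 4 characters are dropped anyway.
_STOPWORDS = {"this", "that", "with", "from", "what", "your", "into", "about", "takes", "after", "over"}


def _keywords(text: str) -> Set[str]:
    """Lowercase words of 4+ characters, minus stopwords."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {w for w in words if len(w) >= 4 and w not in _STOPWORDS}


def _is_heading(paragraph: str, max_len: int) -> bool:
    """Treat a short paragraph without closing punctuation as an H2-style header."""
    return len(paragraph) < max_len and not paragraph.rstrip().endswith((".", "!", "?", ":"))


def extract_title_relevant_text(title: str, body: str, min_chars: int = 300,
                                fallback_chars: int = 1500, heading_max_len: int = 120) -> str:
    paragraphs = [p.strip() for p in body.split("\n\n") if p.strip()]
    title_kw = _keywords(title)
    if not title_kw:
        return body[:fallback_chars]

    def overlap(p: str) -> int:
        return len(title_kw & _keywords(p))

    # 1) H2 detection: a header sharing at least half the title keywords,
    #    taken up to (not including) the next header.
    for i, p in enumerate(paragraphs):
        if _is_heading(p, heading_max_len) and 2 * overlap(p) >= len(title_kw):
            section = []
            for q in paragraphs[i + 1:]:
                if _is_heading(q, heading_max_len):
                    break
                section.append(q)
            text = "\n\n".join(section)
            if len(text) >= min_chars:
                return text
            break  # matching header found but its section is too short

    # 2) Keyword filter: keep matching paragraphs; once the relevant part has
    #    started, stop after 2 non-matching paragraphs in a row.
    kept, misses = [], 0
    for p in paragraphs:
        if overlap(p) > 0:
            kept.append(p)
            misses = 0
        elif kept:
            misses += 1
            if misses >= 2:
                break
    text = "\n\n".join(kept)
    if len(text) >= min_chars:
        return text

    # 3) Fallback: the original opening of the article.
    return body[:fallback_chars]
```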

This dropped the Section 702 / Meta / Deepfake bleed-through to zero. For pipelines that handle roundup-style sources, this preprocessing is essential.

Failure Case 4: deepseek-r1:70b — Thinking Mode Is a Landmine for Mass Production

The last comparison target was deepseek-r1:70b (a thinking-type model). The expectation: "thinks more deeply, produces deeper insight." The result was the opposite.

What Happened (v17)

Runtime 239.6 minutes (4 hours) for a "completed" run, but the breakdown is grim:

| Phase | Result |
|---|---|
| D0 Hook + Pick | ✓ Got it |
| D1 Headlines | ❌ TIMEOUT (exceeded 30 minutes, empty response) |
| D2 Deep Dives 4 sections | ✓ Each took 15-20 minutes |
| D3 Stories | ✓ |
| D4 Takeaways | ✓ |
| Phase E review | ❌ TIMEOUT |
| Phase E revise | ❌ TIMEOUT |
| Phase F polish | ❌ TIMEOUT |

So the final article_final.md is the Phase D draft passed through Phases E/F untouched, with the Headlines section missing. It doesn't qualify as a comparison subject.

Cause

deepseek-r1 is thinking-type — generates a long internal thinking process before responding. In this pipeline:

- Headlines is a bulk task that summarizes each of 14 articles in one line → 14 chains of thought blew through the 30-minute TIMEOUT

- Phase E review reads the full article and audits everything for duplication/fabrication → thinking expanded too much, timed out

- Phase F polish, same

It can manage small tasks like "one paragraph per theme" in deep dives, but on tasks that require "parallel, large, exhaustive" coverage, the thinking chain self-destructs.

The Deep Dive Content Was Actually Good

The Deep Dive sections that did finish were high quality. Bottom lines were specific:

Cerebras's IPO filing and multi-billion dollar deals with AWS and OpenAI position it as a formidable competitor to Nvidia in the high-performance AI hardware market.

Hugging Face's Nemotron OCR v2, trained on 12 million synthetic images, reduces NED scores for non-English languages to as low as 0.035, demonstrating the transformative potential of synthetic data in multilingual OCR.

Numerical use is at v18's level, logical structure at v13's level. If Headlines and Phase E/F had worked, it could have made the top tier.

Lessons

- Thinking-type LLMs are bad at "parallel bulk tasks". Headlines-style "summarize N items in one line each at once" is fatally heavy

- Stretching TIMEOUT from 30 min to 2 hours might make it work, but a pipeline that takes 8 hours per article isn't realistic

- If you use deepseek-r1, you have to design tasks to "1 call = 1 theme deep-dive" granularity. Not suited to a general-purpose article generation pipeline like this

- Speed ratio = v18 (10.6 min) vs v17 (240 min) = about 23×. Not a production option.
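Applied to Headlines, "1 call = 1 item" granularity means one small call per article instead of one prompt asking for 14 one-liners. A single stuck call then can't sink the whole section. This sketch assumes summarize_one is a hypothetical callable that wraps one LLM request with its own timeout, and that it raises or returns an empty string on failure. The failure budget is also an assumption.

```python
from typing import Callable, List, Tuple


def build_headlines(
    articles: List[dict],
    summarize_one: Callable[[dict], str],  # hypothetical: one short LLM call per article
    max_failures: int = 3,
) -> Tuple[List[str], List[str]]:
    """Summarize articles one call at a time; return (bullets, titles that failed)."""
    bullets, failed = [], []
    for article in articles:
        try:
            line = summarize_one(article).strip()
        except Exception:  # e.g. a timeout raised by the HTTP client
            line = ""
        if line:
            bullets.append("- " + line)
            continue
        # Exceptions and empty responses both count as failures.
        failed.append(article["title"])
        if len(failed) > max_failures:
            raise RuntimeError(f"too many failed headline calls: {failed}")
    return bullets, failed
```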

For this pipeline, deepseek-r1 is firmly out.

5-Model Feature Comparison Table

5-tier rating (more ★ = better; for "fabrication risk" only, more ★ = lower risk).

| Item | v13 qwen3.6 dense | v18 qwen3.6 MoE | v14 llama3.3 70B | v15 gemma3 27B | v16 mistral-small3.2 24B |
|---|---|---|---|---|---|
| Size / Speed | ★★★ | ★★★★★ | ★ | ★★★ | ★★★★ |
| Numerical accuracy | ★★★★ | ★★★★★ | ★★★ | ★★★★ | ★★★★ |
| Stylistic naturalness | ★★★★★ | ★★★★ | ★★ | ★★★★ | ★★★ |
| Logical structure | ★★★★★ | ★★★★★ | ★★ | ★★★★ | ★★★ |
| Hook | ★★★★★ | ★★★★ | ★ | ★★ | ★★★ |
| Bottom-line specificity | ★★★★ | ★★★★★ | ★★★ | ★★★★★ | ★★★ |
| Fabrication risk (low) | ★★★★★ | ★★★★★ | ★★★ | ★★ | ★★★★ |
| Translatability | ★★★★★ | ★★★★ | ★★★★ | ★★★ | ★★★★ |

Use-Case Rankings

| Use case | 1st | 2nd | 3rd |
|---|---|---|---|
| Want to read it as prose | v18 | v13 | v15 |
| Want it dense with numbers | v18 | v15 | v16 |
| Speed-first (mass production) | v18 (10.6 min) | v16 (15.6 min) | v15 (25.3 min) |
| Memory-conscious / lightweight | v16 (15GB) | v15 (17GB) | v13 (30GB) |

Per-Model Quirks and Observations

v13 qwen3.6:27b-q8_0 — MBA-Paper Voice

- Each Deep Dive is built on the 3-step "fact → structural driver → strategic implication"

- Numbers strictly follow source data. Doesn't invent unknown numbers; substitutes "high throughput", "significantly reducing", etc.

- Deliberately omits the Stories section. It's optional in the prompt, so it makes the call not to force it

- Slightly stiff voice, but translates most naturally

- Quote:

This direct confrontation with Nvidia's dominance is further evidenced by...

v18 qwen3.6:35b-a3b-q8_0 (MoE) — Editor Who Uses Every Number

- Best at using numbers: deploys $510M / 76% / $237.8M / non-GAAP $75.7M loss in full

- Connecting the Dots (Stories) causality is more structural than in the other models

- With only 3B active parameters, it finishes in 10.6 minutes, an astonishing speed (about 2.6× faster than the dense 27B v13)

- Quote:

generated $510 million in revenue in 2025, a nearly 76% increase from the prior year

v14 llama3.3:70b — Zero 70B Dignity

- A 70B-class model, yet grammar mistakes remain. Quote:

Cerebras' IPO filing and its $23 billion valuation **the company to** further challenge Nvidia's (verb missing)

- The Hook is extremely short, just: Cerebras files for IPO amid $15 million cryptocurrency heist.

- Weak inter-paragraph connections; doesn't explain relationships between themes

- The 158.3-minute runtime came from CPU/GPU spillover (the model plus KV cache exceeded the GPU's memory allowance, so part of the work fell back to the CPU), roughly a 3× blowup

- A textbook case for "size doesn't equal quality"

v15 gemma3:27b — Verbose Analyst, Inflates Numbers

- Most verbose (17,457 chars). Aggressive enough to make specific predictions

- But the highest fabrication risk. Examples:

"App releases surged 60% year-over-year in **Q1 2025**" ← the source data says Q1 2026

"the EU will likely mandate independent security audits ... **by Q1 2027**" ← a prediction stated as if it were fact

"$2.2 billion in Series G and H funding rounds" ← Series G $1.1B + Series H $1B summed; that combined figure isn't in the source

- 17GB / lightweight, but fact-checking is mandatory before publishing

v16 mistral-small3.2:24b — Solid Mid-Tier

- 15GB / 15.6 min: the lightest of the group and the fastest dense model

- Content is unobjectionable, low fabrication risk

- Editor-meta leakage at the top: "Here is the corrected full text with the requested edits:" shows up at the start of the article

- Occasional syntax breakdowns:

Anthropic's cybersecurity model could for secure AI applications (verb missing)

- With a post-processing step to strip the leading meta, it's fine for lightweight use
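The post-processing step for v16's leading meta can be a filter that looks only at the top of the document. The patterns are hypothetical, generalized from the single leak quoted above. Because only leading lines are checked, a legitimate sentence later in the article is never touched.

```python
import re

# Hypothetical patterns for editor-meta preambles; extend as new variants show up.
_META_LINE = re.compile(
    r"^\s*(here is|here's|below is|sure)\b.*(text|article|version|edits?)\b.*$",
    re.IGNORECASE,
)


def strip_leading_meta(article: str) -> str:
    """Drop meta lines (and blank lines) at the very top of the article only."""
    lines = article.splitlines()
    while lines and (not lines[0].strip() or _META_LINE.match(lines[0])):
        lines.pop(0)
    return "\n".join(lines)
```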

Adoption: qwen3.6:35b-a3b-q8_0 (MoE) Across the Board

For both English writing and Japanese translation, I'm adopting qwen3.6:35b-a3b-q8_0 (MoE) as the single model.

| Stage | Model | Size | Speed |
|---|---|---|---|
| English article generation | qwen3.6:35b-a3b-q8_0 (MoE) | 38GB | 10.6 min/article |
| Japanese translation | qwen3.6:35b-a3b-q8_0 (MoE) | same | 3.8 min/article |

Three Reasons MoE Won

At first I thought v13 dense's "MBA-paper-style careful driving" was the right answer for prose. But after reading both through to their final form, my conclusion flipped: MoE has more of a "human writing with conviction" feel.

- Doesn't dodge numbers: v13 hedges with "significant majority" and "substantial portion," conservatively ducking numbers it doesn't know. v18 fires off all of them: $510M, 76% growth, $237.8M net income, non-GAAP $75.7M loss, 12 million synthetic images, 34.7 pages/sec, NED 0.035-0.069. From the same source, v18's information density is clearly higher.

- Stories (🔗 Connecting the Dots) has actual causality: v13 chose to omit this section entirely (it's optional in the prompt). v18 lays out a 3-theme causal chain: "surging hardware investment → rising dependence on security infrastructure to protect those compute assets → market expectation around Anthropic's cybersecurity model." Readers shift from "huh" to "I see."

- About 2.6× faster: v13 took 27.4 min vs v18's 10.6 min. Even on a weekly cadence, that changes how much headroom there is to publish more.

MoE Wins on Translation Too

The same MoE handles translation. In an experiment translating v13's English article into Japanese with both models:

- dense compresses numbers ("12 million" → "数百万", i.e. "several million", losing the figure)

- MoE preserves every number: 5億1,000万ドル ($510 million), 1,200万枚 (12 million images), NED 0.035-0.069, all faithful to the source

- MoE is 2.6× faster (dense 9.8 min vs MoE 3.8 min)

Running English writing and translation on the same model keeps operations simple: there's no model-swap cost, and memory occupancy stays at one model's worth.

Dense's Polish vs MoE's Heat

What I thought was dense's one advantage, "stylistic polish," turned out to lose to the persuasiveness of number-backed arguments when read alongside MoE. Less softness of tone, more data-driven heat. The MoE design of 35B total / 3B active parameters aims to keep the quality of a large parameter count while speeding up inference. It turned out to be especially well matched to news article generation, which demands "logical structure + numerical accuracy + speed."

Use Cases (Reference)

| Use case | Pick | Reason |
|---|---|---|
| Want to read it as prose | v18 qwen3.6 MoE | Number density + causality + speed; you feel the writer's heat |
| Stylistic polish first | v13 qwen3.6 dense | MBA-paper voice, safe via hedging but less information |
| Lightweight / hardware-constrained | v16 mistral-small3.2 | 15GB / 15.6 min, assuming a post-processing script |

Models Not Adopted

- v14 llama3.3:70b — Mediocre across size, time, and quality (grammar mistakes remain even at 70B)

- v15 gemma3:27b — Fabrication risk is too high; fact-checking labor erases the savings ("Q1 2025" vs "Q1 2026" mistakes etc.)

- v16 mistral-small3.2:24b — Editor-meta leakage makes it sub-production ("Here is the corrected text..." at the top)

- v17 deepseek-r1:70b — Thinking-type mismatched with the pipeline structure (Headlines / Phase E/F all TIMEOUT)

The biggest takeaway is that "size," "vendor," and "generation" guarantee nothing about quality. You can't pick from benchmark numbers alone; you have to run the same data through each model and read the results side by side. I also got to see first-hand that the relatively new MoE architecture can beat dense models for the right use case.

Lessons Summary

Model Selection

- Practical ceiling on a 64GB Mac is around 50GB models (KV cache included)

- 70B+ thinking models are heavy on a 64GB machine (96GB recommended)

- MoE speed tracks the active parameter count: 35B-A3B runs much closer to 3B speed than to 35B speed (end to end in this test, about 2.6× faster than the dense 27B)

- Q8 quantization loss is small. No need to insist on bf16
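The rule that MoE speed follows active parameters comes from token generation being largely memory-bandwidth bound: each new token reads roughly all active weights once. The sketch below computes a rough ceiling under that assumption. It uses the M1 Max's published 400GB/s memory bandwidth and about 1 byte per parameter at Q8. It ignores quantization scales, KV cache reads, and prompt processing.

```python
M1_MAX_BANDWIDTH_GBPS = 400.0  # Apple's published memory bandwidth for M1 Max
Q8_BYTES_PER_PARAM = 1.0       # approximation; real Q8 formats add small per-block scales


def decode_ceiling_tok_s(active_params_billion: float,
                         bytes_per_param: float = Q8_BYTES_PER_PARAM,
                         bandwidth_gbps: float = M1_MAX_BANDWIDTH_GBPS) -> float:
    """Upper bound on tokens/sec if every token must stream all active weights once."""
    gb_per_token = active_params_billion * bytes_per_param
    return bandwidth_gbps / gb_per_token


dense_27b = decode_ceiling_tok_s(27)
moe_a3b = decode_ceiling_tok_s(3)
print(f"dense 27B: {dense_27b:.0f} tok/s, MoE A3B: {moe_a3b:.0f} tok/s, ratio {moe_a3b / dense_27b:.0f}x")
```

The theoretical 9× gap is a per-token ceiling, not an end-to-end prediction. The measured difference in this pipeline was about 2.6×, because the bandwidth model ignores every cost except streaming weights.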

Ollama Ops

- Homebrew and official .pkg builds bundle different libraries

- think:True/False behavior is model-dependent (qwen3-next is broken both ways; qwen3.6 works with False)

- When swapping models, run ollama stop explicitly; otherwise the previous model's memory isn't released

- iogpu.wired_limit_mb=61440 raises the M1 Max GPU memory cap to 60GB (resets on reboot)
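The two ops items above can be scripted so that every model swap unloads the previous model first. This sketch uses subprocess. ollama stop <model> and sysctl iogpu.wired_limit_mb are the commands named above; the function names and the dry-run default are hypothetical. The sysctl needs sudo and, as noted, doesn't survive a reboot.

```python
import subprocess
from typing import List, Optional

WIRED_LIMIT_MB = 60 * 1024  # 61440 MB = the 60GB GPU cap used in this setup


def swap_commands(previous_model: Optional[str], set_wired_limit: bool = False) -> List[List[str]]:
    """Commands to run before loading a new model with Ollama."""
    cmds = []
    if set_wired_limit:
        # Needs admin rights; the value resets on reboot.
        cmds.append(["sudo", "sysctl", f"iogpu.wired_limit_mb={WIRED_LIMIT_MB}"])
    if previous_model:
        # Unload the old model now instead of waiting for its keep-alive to expire.
        cmds.append(["ollama", "stop", previous_model])
    return cmds


def run(cmds: List[List[str]], dry_run: bool = True) -> None:
    """Print each command; execute it only when dry_run is False."""
    for cmd in cmds:
        print(" ".join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)
```

For example, run(swap_commands("qwen3.6:27b-q8_0")) prints "ollama stop qwen3.6:27b-q8_0" without executing anything until dry_run=False is passed.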

News Article Generation Pipeline

- Wired/Verge weekly roundup articles mix unrelated topics in the body → preprocessing to extract title-related paragraphs is mandatory

- Phase B narratives tend to compress specific facts → carrying important numbers in a key_facts field is effective

- Confirm sourcing before using "partners with" in Headlines (LLMs jump to "partnership" too easily)

- Without a Bottom-line banned-phrase list, "reshaping the industry" / "a new era of" run rampant

- Imperative-voice Takeaways ("Watch X" / "Evaluate Y") come out in Japanese as a preachy "〜せよ" ("you must...") → force an "observation" / "implication" form

- Without an explicit "do not round numbers" in the translation prompt, dense models compress "12 million" into "数百万" ("several million")
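Besides putting "do not round numbers" in the prompt, you can catch rounding after the fact by flagging Japanese vague-quantity expressions such as 数百万 ("several million") or 数十億 ("several billion"). The pattern, 数 followed by numeral unit characters, is an assumption, and it also flags legitimate uses. Treat hits as review prompts, not automatic fixes.

```python
import re
from typing import List

# 数 ("several") followed by numeral unit characters: 数十, 数百万, 数千億, ...
_VAGUE_QUANTITY = re.compile(r"数[十百千万億兆]+")


def find_vague_quantities(japanese_text: str) -> List[str]:
    """Return vague quantity expressions that may hide a rounded-away figure."""
    return _VAGUE_QUANTITY.findall(japanese_text)
```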

Side Note: Built an Unlisted Publishing Feature for Draft

For readers who want to read each model's full article, I posted them as unlisted on Draft with URLs below. They don't appear in the listing — only people who know the URL can read them.

Draft (the platform I run) didn't have an "unlisted" feature, so I added one to the server and CLI for this comparison article. I added 'unlisted' to the status enum. The listing query was already status='published'-only, so no extra exclusion logic was needed. The article detail view blocks access via if status in ('draft','pending_review','blocked'). Since unlisted isn't on that list, it automatically falls into the "anyone can view" bucket. A two-file change, minimal.

On the CLI side, I added a --unlisted flag to draft publish.

draft publish # Normal publish (appears in listing)
draft publish --unlisted # Unlisted (hidden from listing, direct URL only)
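The visibility rules described above reduce to two predicates, and the CLI flag only changes which status gets sent. This is a Python sketch of that logic. Draft's actual server code isn't shown, so the function names and the argparse layout are hypothetical. Only the statuses named above ('published', 'unlisted', 'draft', 'pending_review', 'blocked') come from the source.

```python
import argparse
from typing import List, Optional

HIDDEN_FROM_DETAIL = ("draft", "pending_review", "blocked")


def appears_in_listing(status: str) -> bool:
    # The listing query only selects status='published', so 'unlisted' is excluded for free.
    return status == "published"


def viewable_by_url(status: str) -> bool:
    # The detail view blocks only these statuses, so 'unlisted' stays viewable by URL.
    return status not in HIDDEN_FROM_DETAIL


def parse_publish_args(argv: Optional[List[str]] = None) -> str:
    """Hypothetical `draft publish [--unlisted]` parser returning the status to send."""
    parser = argparse.ArgumentParser(prog="draft publish")
    parser.add_argument("--unlisted", action="store_true",
                        help="hide from listings; reachable only by direct URL")
    args = parser.parse_args(argv)
    return "unlisted" if args.unlisted else "published"
```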

Each model's full article (English + Japanese):

- v13 qwen3.6:27b-q8_0 (dense): English / Japanese

- v18 qwen3.6:35b-a3b-q8_0 (MoE) ← Adopted: English / Japanese

- v14 llama3.3:70b: English / Japanese

- v15 gemma3:27b: English / Japanese

- v16 mistral-small3.2:24b: English / Japanese

- v17 deepseek-r1:70b (partial-failure reference): English / Japanese

Work log: 2026-04-25 to 2026-04-26, Mac M1 Max 64GB / Ollama 0.21.2 official build